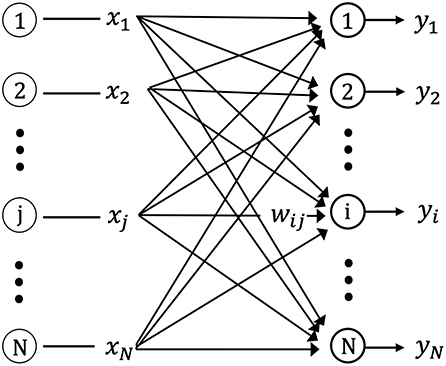

Yet pattern discrimination is an essential part of human (and nonhuman) cognition. Algorithmic identity politics reinstate old forms of social segregation, such as class, race, and gender, through defaults and paradigmatic assumptions about the homophilic nature of connection. Instead of providing a more "objective" basis for decision making, machine-learning algorithms deepen bias and further inscribe inequality into media. Because identity is imposed on input data in order to filter (that is, to discriminate) signals from noise, patterns become a highly political issue. How do "human" prejudices reemerge in algorithmic cultures allegedly devised to be blind to them? To answer this question, this book investigates a fundamental axiom in computer science: pattern discrimination.
